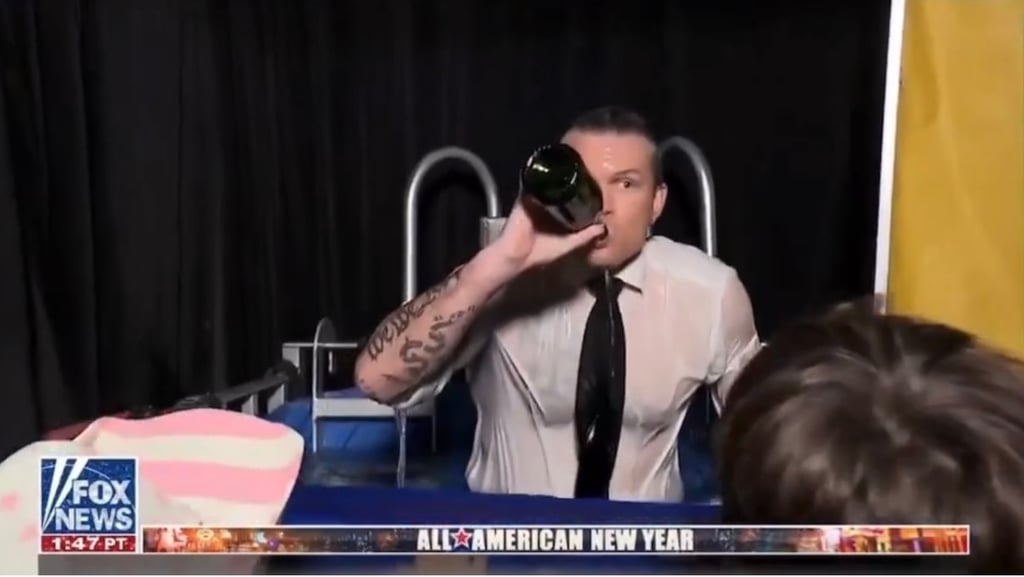

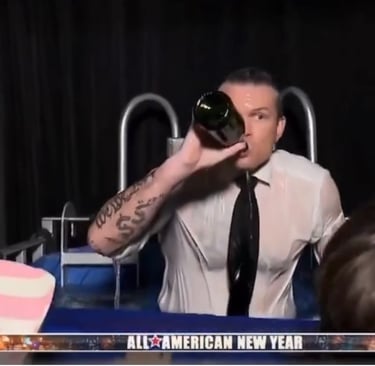

Raging Alcoholic Lil' Petey Kegsbreath makes THREATS!

The Pentagon just gave an AI company a wild ultimatum: hand over unrestricted access to its model or get branded a national security risk. Critics say it’s policy chaos… the Defense Department is threatening to blacklist the same company it is simultaneously trying to force into service.

This whole thing feels like the Pentagon trying to yell two completely different things at the same time and hoping nobody notices. Defense Secretary Pete Hegseth basically told Anthropic’s CEO, Dario Amodei, “Give us full, unrestricted access to your Claude AI model by Friday… or we’ll label you a national security risk.” And that label is no joke. It’s usually reserved for foreign companies tied to adversaries, and it could freeze Anthropic out of working with government contractors.

But here’s the twist that’s making lawyers and policy folks do a double take 🤨. At the same time the Pentagon is threatening to brand Anthropic a supply-chain threat, it’s also floating the idea of using the Defense Production Act… that Cold War-era emergency law… to force the company to work with the military and scrap its ethical guardrails. Those guardrails include not using the AI to surveil Americans or power autonomous weapons.

So on one hand: “You’re too risky to work with.”

On the other: “We’re going to force you to work with us.”

Even a former Trump White House AI adviser, Dean Ball, called that logic “incoherent” and basically insane. You can’t tell every defense contractor not to touch Anthropic’s tech while also saying the Defense Department must use it. That’s not strategy. That’s policy whiplash.

This all traces back to a January operation targeting Nicolás Maduro, where the U.S. military reportedly used Claude. When Anthropic asked questions about how its model was being used, Pentagon officials weren’t thrilled. Fast forward to now… and we’ve got a standoff.

Anthropic, along with OpenAI, Google, and xAI, already holds a Pentagon contract worth up to $200 million. But Hegseth has made it clear he wants AI “without ideological constraints.” Translation: fewer limits on how the military can deploy it. Critics hear that and immediately think surveillance and autonomous weapons.

Legal experts say this is about as heavy-handed as it gets. Some conservatives who hated Biden’s use of the Defense Production Act are now uneasy about expanding executive power even further. Senators Elizabeth Warren and Andy Kim are warning this could wreck bipartisan support for the law. Meanwhile, free-market policy folks on the right are also saying, “Slow down.”

The Pentagon insists this isn’t about mass surveillance or killer robots 🤖. Other AI companies are reportedly cooperating in classified settings. But Anthropic hasn’t budged on its red lines, and it’s not clear whether it will cave, sue, or dig in.

At its core, this is a bigger fight about who controls advanced AI in the U.S.: private companies with ethical guardrails, or the national security state demanding full access. When you’re threatening to both blacklist and commandeer the same company in the same breath, it suggests something deeper than routine contract drama. It’s a stress test for how America handles cutting-edge tech when warfighters, lawyers, and Silicon Valley values all collide in the same room.